
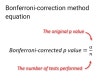

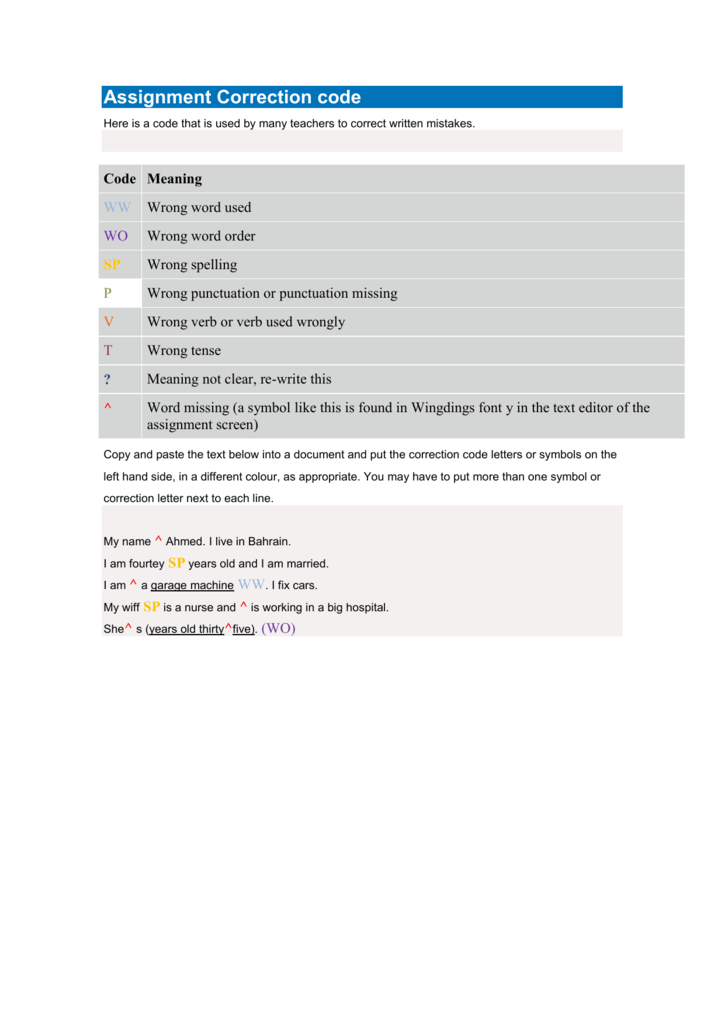

Multiple correction procedures for post-hoc contrasts between group means in a one-factorial design

In real situations, there will most likely be a mix of true positives and true negatives, and p-value adjustments aim to optimize their detection, i.e., to avoid both Type I errors (false positives) and Type II errors (false negatives).

Let’s use the “The knees who say night” data from Whitlock and Schluter (2009), The Analysis of Biological Data, as used in tutorial 3. Let’s load the data:

```r
circadian <- read.csv("chap15e1KneesWhoSayNight.csv")
```

We know from tutorial 3 that the variances of the three groups (treatments) were not significantly different, and that the ANOVA was significant, indicating that at least two groups differ in their means. Which ones?

```r
pairwise.t.test(circadian$shift, g = circadian$treatment, p.adjust.method = "none")
pairwise.t.test(circadian$shift, g = circadian$treatment, p.adjust.method = "bonferroni")
pairwise.t.test(circadian$shift, g = circadian$treatment, p.adjust.method = "BH")
```

The first call gives uncorrected P-values. Setting `p.adjust.method = "BH"` in the last call makes the pairwise t-test function use the FDR procedure (coded as BH after its original authors, Benjamini & Hochberg). Note that the function reports only the (corrected) P-values for pairwise (i.e., two at a time) comparisons of means. Ideally, you would report these values in a table presenting, for each contrast, the observed t-value together with its original (uncorrected) and corrected P-values (as seen in our lecture). To my knowledge, there is no standard function in R that produces such a table. To do that, I wrote two specialized functions for one- and two-way ANOVAs. Both are included in the file pairwise.t.table.R (provided with the tutorial) and can be loaded into R using the command source:

```r
source("pairwise.t.table.R")
```
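The kind of table described above can be sketched in base R. This is a minimal, hypothetical helper (`pairwise_t_sketch` is an illustrative name, not one of the functions in pairwise.t.table.R) and it covers the one-way design only. It assumes the pooled standard deviation across all groups, which is the default in `pairwise.t.test`, so its P-values should match that function's output while also showing the t-values.

```r
# Hypothetical helper, NOT one of the tutorial's functions in pairwise.t.table.R.
# One-way design only: for every pair of groups it returns the observed t-value,
# the uncorrected P-value and the P-value corrected with p.adjust().
# Assumption: pooled SD across all groups, as in the default of pairwise.t.test().
pairwise_t_sketch <- function(x, g, method = "BH") {
  g <- factor(g)
  lev <- levels(g)
  means <- as.numeric(tapply(x, g, mean))
  ns <- as.numeric(tapply(x, g, length))
  vars <- as.numeric(tapply(x, g, var))
  # Pooled within-group variance (the ANOVA mean square error) and its df
  df.res <- sum(ns - 1)
  s2 <- sum(vars * (ns - 1)) / df.res
  # All pairs of group indices: each column of combn() is one contrast
  pairs <- combn(length(lev), 2)
  i <- pairs[1, ]
  j <- pairs[2, ]
  t.obs <- (means[i] - means[j]) / sqrt(s2 * (1 / ns[i] + 1 / ns[j]))
  p.raw <- 2 * pt(-abs(t.obs), df = df.res)   # two-sided, uncorrected
  data.frame(contrast = paste(lev[i], "-", lev[j]),
             t = t.obs,
             p.uncorrected = p.raw,
             p.corrected = p.adjust(p.raw, method = method))
}
```

For the circadian data, the call would be `pairwise_t_sketch(circadian$shift, circadian$treatment, method = "bonferroni")`; `method` accepts any method known to `p.adjust`, so the same table can show Bonferroni- or BH-corrected values.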
* NOTE: Tutorials are produced in such a way that you should be able to easily adapt the commands to your own data!

Understanding the issues of inflated Type I error when conducting multiple tests

Let’s assume a one-way ANOVA design with 10 groups (treatments) in which the null hypothesis H0 is true; in other words, all samples come from populations with the same mean. Assume that the statistical population mean is 10 and its standard deviation is 3. Let’s then sample observations at random from that population and divide them into 10 groups (with 7 observations each). We can then run an ANOVA on the simulated data (`Data.simul`) and extract its P-value from the `Pr(>F)` column of the ANOVA table. As expected, given that all samples came from the same population, the null hypothesis was most likely not rejected (i.e., P-value > alpha, assuming alpha = 0.05).

Now let’s repeat the sampling multiple times and count how many times the null hypothesis H0 was rejected (i.e., how many times the ANOVA P-value was smaller than alpha). Here we will again use the functions lapply and sapply to run the same analysis across multiple data sets. Let’s consider 1000 data sets based on the population described above. The observed Type I error rate is the proportion of these tests with a P-value at or below alpha:

```r
TypeIerror <- length(which(P.values <= 0.05))/n.tests
```

Pretty close to alpha (0.05), right? If you had run the simulation an infinite number of times, the value in TypeIerror (i.e., the rate of false positives) would have been exactly 0.05, i.e., 5% of tests would have rejected H0 when H0 was true. The issue here is that we ended up with about 50 significant tests even though H0 was true in every one of them.

Let’s use the Bonferroni and the FDR adjustments for multiple tests to adjust these p-values:

```r
p.bonf <- p.adjust(P.values, method = "bonferroni")
p.fdr <- p.adjust(P.values, method = "fdr")
```

Let’s view the original and the adjusted p-values side by side:

```r
View(cbind(P.values, p.bonf, p.fdr))
```

Go through these p-values and you will notice that none of them were considered significant after adjustment, even though about 50 of the original p-values were. We can also count them as follows:

```r
length(which(p.bonf <= 0.05))
```
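A minimal end-to-end sketch of the simulation, assuming the design described above: 1000 data sets, each with 10 groups of 7 observations drawn from a normal population with mean 10 and standard deviation 3. It is not the tutorial's original code. The helper `simulate.data` and the list `simulated.sets` are hypothetical names, and the use of `rnorm` and `aov` is an assumption; `P.values`, `n.tests`, `TypeIerror`, `p.bonf` and `p.fdr` follow the names used in the text.

```r
# Sketch only (assumed design, not the tutorial's exact code): H0 is true in
# every data set because all observations come from the same N(10, 3^2) population.
set.seed(123)            # for reproducibility only
n.tests <- 1000
n.groups <- 10
n.per.group <- 7

# Hypothetical helper: one simulated one-way data set
simulate.data <- function() {
  data.frame(y = rnorm(n.groups * n.per.group, mean = 10, sd = 3),
             group = factor(rep(1:n.groups, each = n.per.group)))
}

# lapply builds the list of data sets; sapply extracts each ANOVA P-value
simulated.sets <- lapply(1:n.tests, function(i) simulate.data())
P.values <- sapply(simulated.sets, function(d)
  summary(aov(y ~ group, data = d))[[1]][1, "Pr(>F)"])

# Proportion of false positives at alpha = 0.05 (expected to be near 0.05)
TypeIerror <- length(which(P.values <= 0.05)) / n.tests
TypeIerror

# Multiple-test adjustments; under a true H0 these usually leave no significant test
p.bonf <- p.adjust(P.values, method = "bonferroni")
p.fdr <- p.adjust(P.values, method = "fdr")
length(which(p.bonf <= 0.05))
length(which(p.fdr <= 0.05))
```

Storing the data sets in a list and then calling `sapply` on it mirrors the lapply/sapply approach mentioned in the text; the exact counts will vary with the random seed.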
REMEMBER: There are no reports or grades for tutorials. Note, though, that reports and midterm 2 are heavily based on tutorials and your knowledge of R. Therefore, you should attend tutorials and/or work on them weekly on your own time.

A good way to work through a tutorial is to have it open in our WebBook with RStudio open beside it. And that’s the way that a lot of practitioners of statistics use R! Also consider using the Forum for tutorials if you have any specific questions along the way.

The Multiple Testing Problem & Adjustment Procedures
January (4th week of classes)
